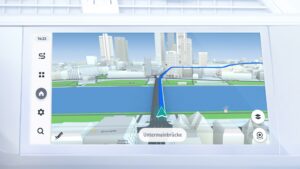

Launched a novel HMI automotive platform and supporting services, with extensive press coverage at the Las Vegas Auto Show in 2016; the platform was also exhibited by the Daimler Mercedes EQ line. Enhanced the mobile application offering by reducing the friction of switching between transportation modes, which increased the adoption rate by 20% and improved NPS scores.

Led a multidisciplinary team that achieved a 30% shorter time to market from innovation to launch. Created and set up facilities for validation, testing, and research.

Other relevant work at HERE: mobile apps, in-car navigation, last-mile multimodality, and watch integration.